Welcome to the SassMobile

Hi, I'm Tim! I hope you enjoy learning about our project as much as I enjoyed working on it!

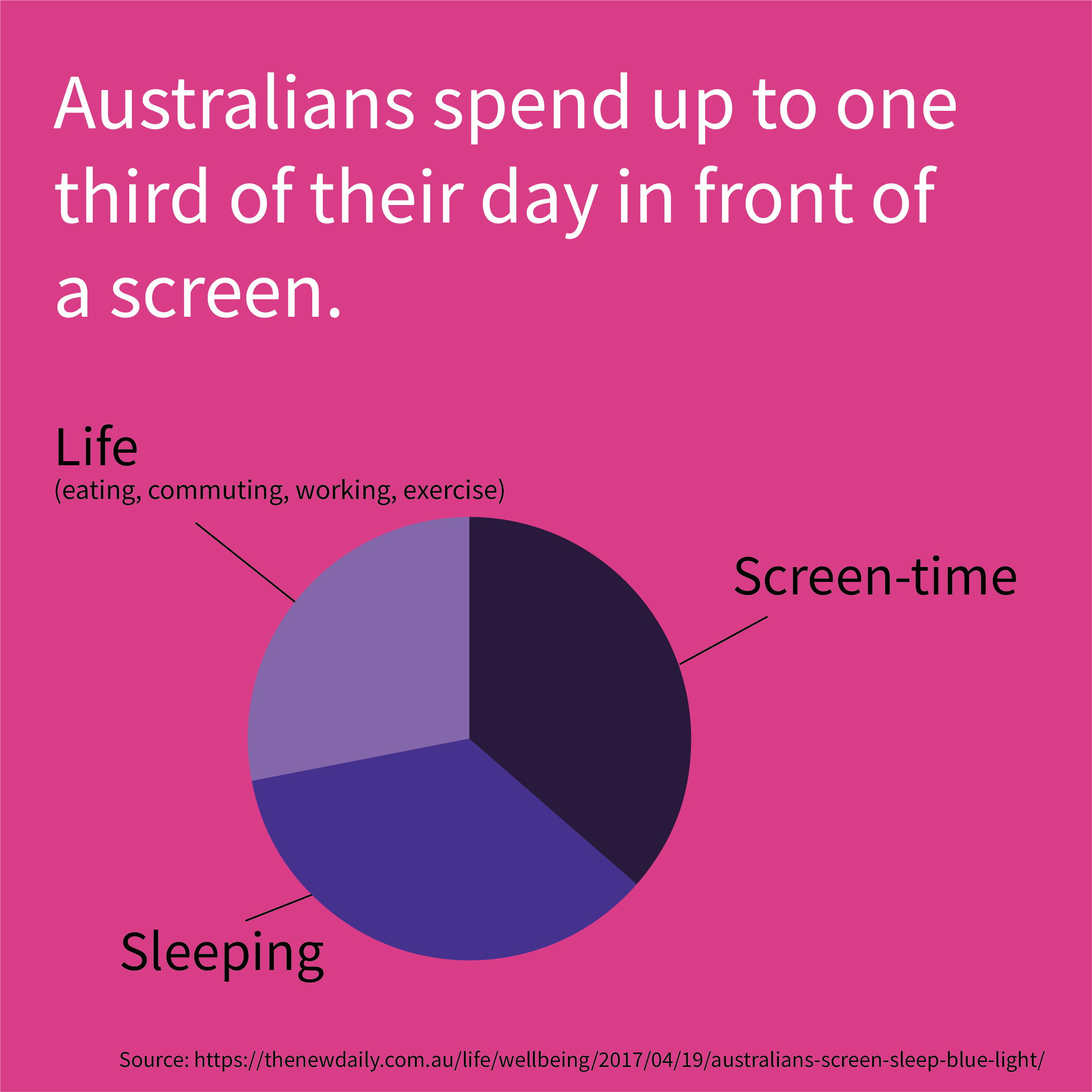

In a world where we are easily swept away by endless hours of Netflix content, or caught chasing that next laugh whilst aimlessly scrolling Facebook, who (or, more specifically, what) will come to your rescue?

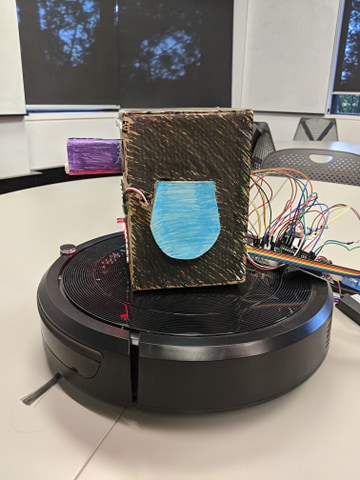

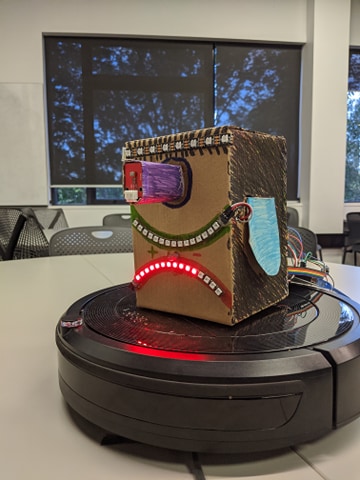

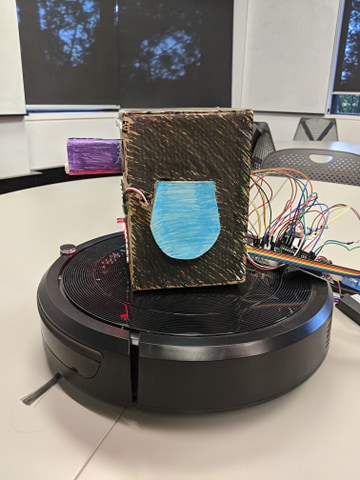

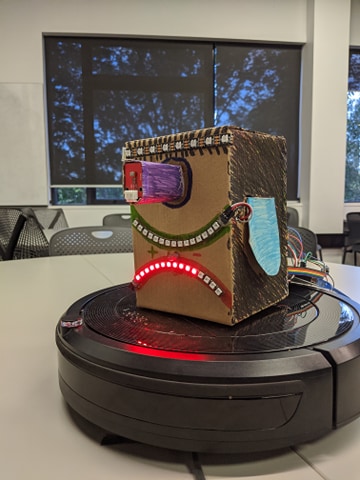

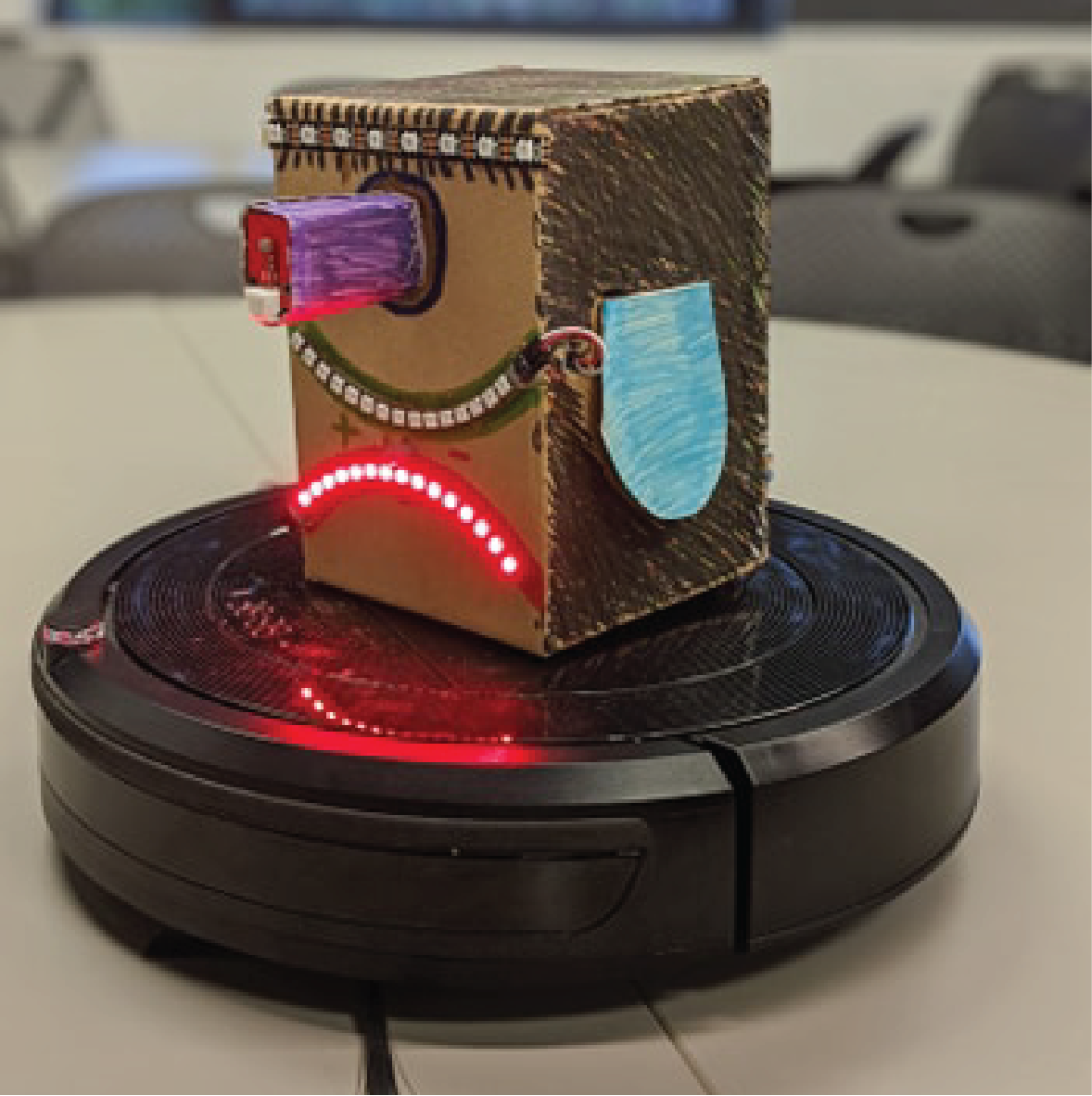

Introducing the SassMobile! This passive-aggressive bot aims to reduce a user's screen time by taking control of their gadgets if they ignore it.

The bot uses various technologies to identify user behaviour and then act on it. These include its own touch sensors, light and movement sensors, as well as face detection and infrared (IR).

Let's say you're sitting down watching some television. The SassMobile, being an inquisitive little guy, moves up to you and says hello. He might then suggest, "It is a beautiful day outside, why don't you go and check it out?"

You might choose to ignore the robot, which won't make it happy. It then starts to distract you by turning up the volume on the TV, all the while implying that you've simply sat on the remote.

The robot will keep pestering you until it sees you move away and, hopefully, go outside. This is the robot's main goal.

A Kickstarter-style video from the prototype demonstration phase

A team video of the robot working at the exhibition

The final version of the robot didn't include all of the features we had planned. Due to unforeseen errors caused by software updates, a few of the components had to be simulated for the exhibit. This is expanded upon further in the reflection. The bot is seen moving around on top of the vacuum. The vacuum's movement is simulated, but ideally it would have been driven by the face recognition software and the ESP32. The hand lighting worked and could be controlled by touching the bot. The audio was simulated because we had power issues with the audio hardware.

Anshuman is in charge of all of the touch controls and the physical build associated with them. He worked on lighting that reacts to twisting knobs, and designed ear flaps for the bot.

Ben is in charge of everything audio. To form a meaningful connection with a person, the robot needs to talk and react.

I am in charge of the visual components, such as controlling screens and using facial detection, which I explore further below.

The following are the sensors I personally worked with on the prototype.

For my part of the project, I focused on getting the robot to follow a user around and to change TV channels. The bot relies on a range of sensors and devices to function properly.

Infrared (IR) was vital to the project. IR sensors detect light that is invisible to the eye, including the signals that TV remotes send out. Not only could IR signals be used to change channels on the TV, they could also be used to direct the robot.
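To make the IR idea more concrete, here is a minimal Python sketch of how a single remote button press can be encoded as pulse timings. It assumes the device uses the common NEC protocol (many brands use others, such as RC5 or Sony SIRC), and the address and command bytes in the usage line are placeholders, not codes captured from our TV or vacuum. On the ESP32 itself, the firmware would drive an IR LED with timings like these, for example through a library such as IRremoteESP8266; the function below only illustrates the frame layout.

```python
# Timing constants for the NEC IR protocol, in microseconds.
# A "mark" is a burst of the 38 kHz carrier; a "space" is carrier off.
LEADER_MARK_US = 9000
LEADER_SPACE_US = 4500
BIT_MARK_US = 562    # ~562.5 us burst before every bit
ZERO_SPACE_US = 562  # short gap encodes a logical 0
ONE_SPACE_US = 1687  # long gap encodes a logical 1


def nec_frame(address: int, command: int) -> list:
    """Return alternating mark/space durations (us) for one NEC frame.

    Even indices are marks (carrier on), odd indices are spaces (carrier off).
    """
    if not (0 <= address <= 0xFF and 0 <= command <= 0xFF):
        raise ValueError("address and command must each fit in one byte")
    # Standard NEC sends each byte followed by its bitwise inverse.
    payload = [address, address ^ 0xFF, command, command ^ 0xFF]
    timings = [LEADER_MARK_US, LEADER_SPACE_US]
    for byte in payload:
        for bit in range(8):  # least significant bit first
            timings.append(BIT_MARK_US)
            timings.append(ONE_SPACE_US if (byte >> bit) & 1 else ZERO_SPACE_US)
    timings.append(BIT_MARK_US)  # trailing burst marks the end of the last space
    return timings


timings = nec_frame(0x04, 0x02)  # placeholder address and command bytes
```

Sending each byte together with its inverse lets a receiver detect a corrupted frame and ignore it, which is useful in a living room full of stray infrared light.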

The bot also uses an ESP32 chip, which, in layman's terms, provides Wi-Fi and Bluetooth connectivity. This was essential, as the bot used it to connect to the camera device.

The bot also uses an Android phone running facial recognition software. This allows the bot to face the user and the TV, and to follow the user around. It also lets the bot interfere with what the user is doing on their phone or computer screen.

Lastly, and most importantly, there is the base of the bot. The SassMobile is built on top of a robotic vacuum cleaner, which allows it to move around, follow people, explore, run away and come to life!

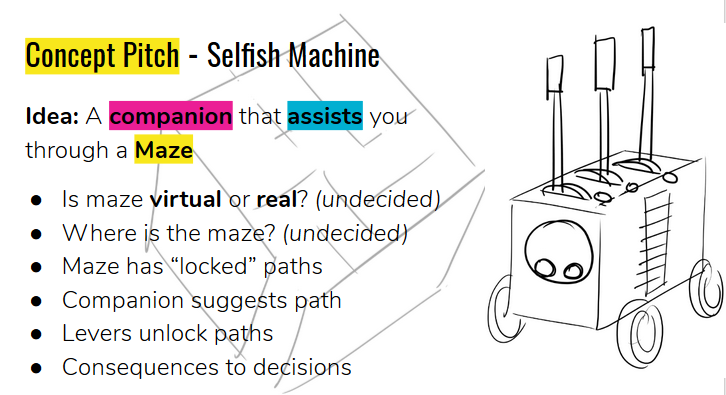

The initial inspiration for the project was a Maze assistant. We wanted something sassy, but also helpful. This was a new area of design for us, so we struggled to narrow our idea down.

From there, we took away a few main ideas and settled on where we wanted to take our project. These were to build something that helps you, is sassy and is in control. Working from these core values, we found inspiration in a video of a talking Roomba (a robotic vacuum). This set us on the track of building a bot on top of one.

As coronavirus restrictions limited the resources we had access to, we thought through the sensors we had available and what would work to form a helpful assistant. When we learnt about controlling a TV through IR, the idea of the SassMobile, a bot that helps reduce screen time, was born.

Looking back on the course this semester, it has certainly been an experience! When we began, we wouldn't have thought we would end up where we are now.

As for the final prototype for the course, I am satisfied with the work we completed. There were a few features I would have loved to see implemented, but technical issues slowed us down. These included a 3D-printed model of the robot to give it a more polished finish, as well as interaction between the robot and phones.

The robot itself is advertised as an advanced species. It can identify that you have been watching something for a prolonged period of time, and can then make decisions based on that judgement. However, due to the technical limits of the systems used and the mishmash of different technologies, things such as turning the bot to follow a face were quite hard.

For the bot to turn, it requires a chain of connections. First, there is a Bluetooth connection between the ESP32 and the mobile phone. This in itself is a buggy experience, and with the current tech it can't be avoided. Second, you then have to run the face identification app on the phone and find a face. As the app is optimised for an older phone and is quite laggy, faces can be hard to detect. Within the scope of the course, programming a face identification app from scratch would have been a stretch, so we made do with what we had. Third, when the phone finds a face, it relays the positional information back to the ESP32, which translates that data into IR signals and sends movement commands to the vacuum to turn it.

As a robotic vacuum isn't designed to be used as a standalone robot, factors such as the vacuum's battery life, turning times and speeds, and electromagnetic interference all add to the difficulty of having a robot follow you around. Nonetheless, I am satisfied with the project as a whole and have thoroughly enjoyed working with the rest of the team.
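The step where positional information becomes a movement command can be sketched in a few lines. This is a hypothetical illustration, not the code we ran: it assumes the phone reports a face bounding box in pixels, that a face on the left of the camera image means the robot should turn left (a mirrored front camera would flip this), and the vacuum command bytes are placeholders rather than real codes.

```python
from enum import Enum
from typing import Optional


class Turn(Enum):
    LEFT = "left"
    RIGHT = "right"
    STOP = "stop"


# Hypothetical command bytes for the vacuum's IR remote. Real values would
# have to be captured from the vacuum's own remote.
HYPOTHETICAL_VACUUM_CODES = {Turn.LEFT: 0x10, Turn.RIGHT: 0x11}


def turn_for_face(face_left: int, face_width: int, frame_width: int,
                  deadband: float = 0.15) -> Turn:
    """Pick the turn that would bring a detected face towards the frame centre.

    face_left and face_width describe the face bounding box in pixels.
    deadband is the total fraction of the frame width, centred on the middle,
    inside which the robot holds still.
    """
    if frame_width <= 0:
        raise ValueError("frame_width must be positive")
    face_centre = face_left + face_width / 2
    # Offset of the face from the frame centre, normalised to about -0.5..0.5.
    offset = face_centre / frame_width - 0.5
    if offset < -deadband / 2:
        return Turn.LEFT
    if offset > deadband / 2:
        return Turn.RIGHT
    return Turn.STOP


def ir_command_for(turn: Turn) -> Optional[int]:
    """Return the IR command byte to send, or None when the robot should hold still."""
    return HYPOTHETICAL_VACUUM_CODES.get(turn)


# A face near the left edge of a 640-pixel-wide frame.
command = ir_command_for(turn_for_face(face_left=0, face_width=100, frame_width=640))
```

The deadband keeps the robot still while the face is close to the centre. With a laggy detector this matters: without it, small jumps in the reported position would make the vacuum twitch back and forth.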

The Bat Sqwad team on the night of the exhibition.

On the night of the exhibition, everything kind of went south. The Arduino application stopped working, which meant we couldn't run our ESP32 code, so we had to simulate the turning of the robot and the switching on and off of the television. Unfortunately, this error came about the night before the exhibition and wasn't patched in time. Reference to the error can be found here. Aside from that, we also simulated the sound, as we found the robot couldn't power all of the lighting and sound at the same time, which was causing some glitching. It was a bit of bad luck, but nonetheless a good experience at our first virtual physical exhibit.